48hr Challenge

SUEDE Designathon 2025

Spot.

AI-powered fact-checking assistant for medical misinformation on social media

This challenge was hosted by the SUEDE Society at the University of Sydney. My team of five, comprising students from diverse design disciplines and universities, collaborated effectively despite not knowing each other beforehand.

I initiated our research on a FigJam board and contributed to ideation, qualitative interviews, and research on the statistics and feasibility of our concept. I later refined the UI design for my portfolio.

Click here to view the original submission

THE DESIGNATHON CHALLENGE

"Australians are heavy digital users, but many feel unprepared in judging, questioning, or resisting the online content shaping their lives. Digital literacy should not feel like a lesson or a chore, but rather a part of daily life. The challenge is to design a digital solution that makes critical and mindful digital engagement effortless in routine contexts."

PROBLEM SPACE

Out of the broader challenge, we chose to address the specific problem of medical misinformation.

Short-form platforms are filled with “health hacks” that replace professional advice for those with limited healthcare access. Influencers often have financial motives, and relatable content can prompt action regardless of truth. The challenge is not just misinformation; it’s helping people act on reliable knowledge.

USER NEEDS

Social media makes a wide variety of information easier to access, but knowing what to trust is harder than ever. To identify misinformation, users need clarity, awareness, and trustworthy communication. This means simple cues that show what's credible and what's not, tools that flag when content is playing on emotions, and content that feels engaging.

Our research identified three user needs: fact-checking misperceptions, finding credible sources, and awareness of bias.

IDENTIFIED INSIGHTS

From interviews and observations, our findings revealed three key themes:

• Users value peer support, but also distrust community-driven platforms.

• Comfort depends on how clear and accessible content feels. Users want simple but accurate content.

• People trust evidence-based verification by checking across multiple sources. This shows the solution isn’t just having more information, but making it clear, credible, and confidence-building.

PROBLEM STATEMENT

From these key themes, we produced a revised problem statement:

How might we support social media users in critically assessing medical information by strengthening digital media literacy, ensuring information is accessible and accurate, and addressing the tension between peer support and trust in credible sources?

TARGET AUDIENCE

Our target users are Australians with limited access to healthcare who often turn to social media for quick medical advice.

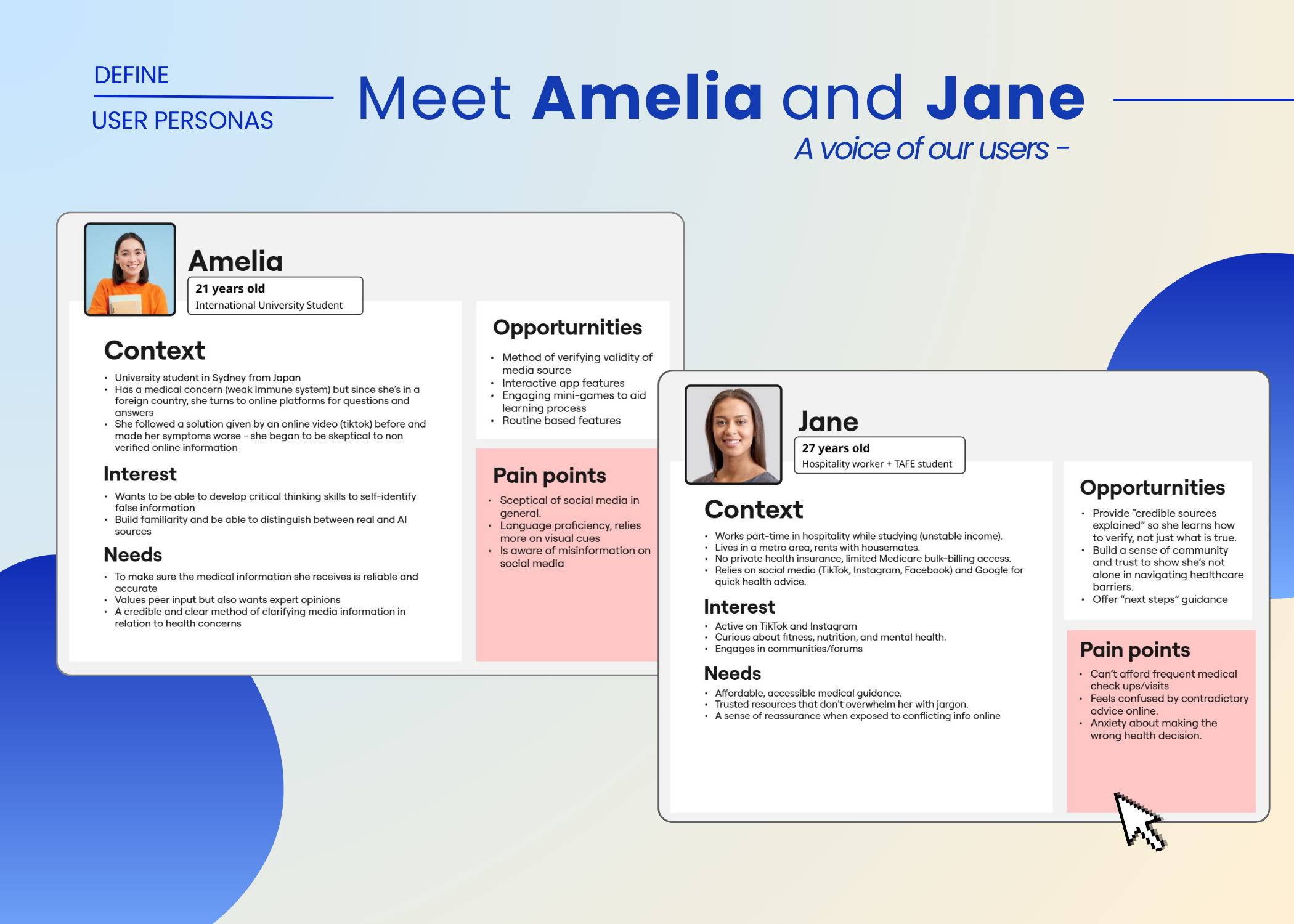

To better understand these users, we developed two personas:

• Amelia, a university student from Japan, struggles with verifying medical information and language barriers but values both peer and expert input.

• Jane, a TAFE student without private insurance, relies on influencers and feels anxious about conflicting advice.

These personas highlight how an AI-powered fact-checking tool can support users like Amelia and Jane in evaluating medical content and recognising signs of misinformation.

FEASIBILITY

To assess the feasibility of our idea, we first explored how AI verifies information.

Our verification approach builds on advances in natural language processing (NLP) and large language models (LLMs), which can analyse and fact-check content at scale.

USER TESTING

For testing, we used a think-aloud protocol and made quick changes using an agile approach. Users told us that too many icons were overwhelming and that the four colour categories felt contradictory, so we simplified the design.

FINAL PROTOTYPE - VIDEO ANALYSIS

When users come across medical information online and want to verify its accuracy, they can share the video directly to Spot through the platform’s share function. This seamless entry point makes fact-checking effortless and integrated into their normal social media flow.

Users aren’t required to sign up unless they wish to save their fact-check history, helping the experience feel lightweight and non-intrusive. Once shared, Spot scans the video and generates a Credibility Report Summary.

The report includes an overall credibility score displayed through a simple Credibility Meter, offering a quick visual of trustworthiness. It highlights red flags and green flags, with an explanation for each. Users can also see relevant credible sources and repeated content created by others. This empowers them to use their critical thinking skills to verify claims independently.
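As a rough sketch, the report described above could be represented with a small data structure. This is illustrative only and not part of our designathon submission: the class names, field names, and the 0-100 score scale are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Flag:
    """One red or green flag and its explanation (hypothetical shape)."""
    kind: str          # "red" or "green"
    explanation: str


@dataclass
class CredibilityReport:
    """Hypothetical shape of a Credibility Report Summary."""
    score: int                                            # assumed 0-100 scale
    flags: list[Flag] = field(default_factory=list)
    credible_sources: list[str] = field(default_factory=list)
    repeated_content: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject scores outside the assumed range.
        if not 0 <= self.score <= 100:
            raise ValueError("score must be between 0 and 100")

    def red_flags(self) -> list[Flag]:
        return [f for f in self.flags if f.kind == "red"]

    def green_flags(self) -> list[Flag]:
        return [f for f in self.flags if f.kind == "green"]
```

Keeping each flag paired with its explanation mirrors the report layout, where every flag is shown with the reason it was raised.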

FINAL PROTOTYPE - HOME PAGE

To maintain a consistent brand identity, each page uses the same blue gradient. On the home page, a blue box highlights the option to paste a media link or share directly from social media, giving users flexibility, especially if they have privacy concerns. On this page, users can also check current or recent medical claims circulating online and learn tips and tricks to spot misinformation themselves.

FINAL PROTOTYPE - SEARCH, HISTORY & PROFILE PAGE

The search page lets users explore online content with a credibility score of 90 or higher. This feature helps users discover trustworthy videos or posts, reinforcing positive habits of following evidence-based information rather than sensationalised claims. The search history page lets users revisit their previous searches; because history is saved per user, this feature requires signing up for an account. Together, these pages create a seamless experience: users can upload content, learn from daily tips, explore high-credibility media, and track their scanning history, all in one lightweight, educational, and empowering app.
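The search page's "90 or higher" rule can be sketched as a simple filter. This is a minimal illustration, assuming scores sit on a 0-100 scale; the names `ScoredItem` and `high_credibility` are hypothetical, and sorting the results by score is our own assumption rather than a documented behaviour of the prototype.

```python
from typing import Iterable, NamedTuple

# Threshold taken from the search page description: "90 or higher".
HIGH_CREDIBILITY_THRESHOLD = 90


class ScoredItem(NamedTuple):
    """A video or post with its credibility score (hypothetical shape)."""
    title: str
    score: int  # assumed 0-100 scale


def high_credibility(
    items: Iterable[ScoredItem],
    threshold: int = HIGH_CREDIBILITY_THRESHOLD,
) -> list[ScoredItem]:
    """Keep items at or above the threshold, highest score first."""
    kept = [item for item in items if item.score >= threshold]
    return sorted(kept, key=lambda item: item.score, reverse=True)
```

An item scoring exactly 90 is included, matching the "90 or higher" wording.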

FINAL PROTOTYPE DEMO

Final user flow:

1. Onboarding

2. Appropriate popups

3. Animated loading screens

4. In-depth analysis

5. Home page

6. Search page

7. Search history page

8. Profile page